How We Cut AI Costs by 80% Running Ollama on Azure - A Production Story

- Pankaj Naik

- Mar 25

Updated: Mar 26

The Problem We Were Trying to Solve

At PANTA Flows, we build workflow automation tools. Like most modern AI SaaS products, we started exploring ways to embed AI into our product - specifically for summarization and small language tasks that would help our users make faster decisions.

The obvious choice? OpenAI or Claude.

But when we ran the numbers, the cost picture wasn't pretty. For our expected traffic of around 200 requests per day - modest by any standard - we were looking at €300–500 per month for GPT-4 level models. And here's the thing: we didn't need GPT-4. We needed something that could reliably summarize a document or extract key points. A 3 billion parameter model would do just fine.

That's when we started asking a different question:

"What if we just ran the model ourselves?"

Why Self-Hosted LLM Made Sense for Us

We weren't building a chatbot. We weren't doing complex reasoning. Our use cases were:

- Summarizing workflow descriptions

- Creating scenes from scripts and summarizing files

- Small classification tasks

For these tasks, a quantized small model running on CPU is more than adequate. The key insight was that we didn't need GPT-4 intelligence; we needed GPT-4 availability.

Self-hosting gave us:

- Predictable costs - one VM price per month, no per-token billing

- Data privacy - nothing leaves our infrastructure

- Speed - sub-2 second responses for warm requests

- Control - we own the stack

Choosing the Right Model

After evaluating several options, we landed on Qwen2.5:3b, a 1.9GB quantized model (Q4_K_M).
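As a rough sanity check on that file size, here is a back-of-envelope calculation using only the two figures quoted in this post (about 3 billion parameters, 1.9GB). Treating 1GB as 10^9 bytes and rounding the parameter count to exactly 3 billion are assumptions.

```python
# Back-of-envelope check of the quantized model size.
# Inputs come from the post; 1 GB = 10**9 bytes is an assumption.
PARAMS = 3e9              # "3 billion parameter model"
QUANTIZED_BYTES = 1.9e9   # "1.9GB quantized model (Q4_K_M)"


def bits_per_parameter(size_bytes: float, params: float) -> float:
    """Average number of bits stored per model parameter."""
    return size_bytes * 8 / params


def fp16_size_gb(params: float) -> float:
    """Size in GB if every parameter were stored as a 2-byte float."""
    return params * 2 / 1e9


print(f"{bits_per_parameter(QUANTIZED_BYTES, PARAMS):.1f} bits per parameter")
print(f"{fp16_size_gb(PARAMS):.1f} GB at 16-bit precision")
```

The quantized file works out to roughly a third of the 16-bit size, which is what makes an 8GB VM a realistic target.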

Why this model?

Architecture Decision: Simple Wins

Before we get into the technical setup, I want to talk about what we deliberately chose NOT to build.

Early in the planning phase, we considered:

- Docker containers with auto-scaling

- Azure Container Apps

- Message queues (Azure Service Bus, Redis)

- API gateway wrappers

We rejected all of it. Here's why:

At 200 requests per day (roughly one request every 7 minutes on average), adding Kubernetes, containers and message queues would be solving problems we don't have yet. The engineering overhead alone would outweigh any benefit.
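The load estimate can be checked with a few lines of arithmetic. This sketch uses the 200 requests/day figure and takes the "sub-2 second" warm response time quoted earlier as an upper bound of 2 seconds per request, which is an assumption.

```python
# Load estimate from figures quoted in this post.
REQUESTS_PER_DAY = 200
SECONDS_PER_REQUEST = 2.0  # assumed upper bound for a warm request


def minutes_between_requests(requests_per_day: int) -> float:
    """Average gap between requests if they were spread evenly over a day."""
    return 24 * 60 / requests_per_day


def busy_fraction(requests_per_day: int, seconds_per_request: float) -> float:
    """Fraction of the day the model spends actually serving requests."""
    return requests_per_day * seconds_per_request / (24 * 60 * 60)


print(f"{minutes_between_requests(REQUESTS_PER_DAY):.1f} minutes between requests")
print(f"{busy_fraction(REQUESTS_PER_DAY, SECONDS_PER_REQUEST):.2%} of the day busy")
```

Averages hide bursts, so this says nothing about peak behaviour, but it does show how far the workload is from needing horizontal scaling.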

Our final architecture is deliberately boring:

No queues. No containers. No orchestration. Just a VM running a process.

Infrastructure Setup

The VM

We chose an Azure Standard_D2as_v4: 2 vCPU, 8GB RAM, running Ubuntu 22.04 LTS.

Memory breakdown:

Tight but perfectly fine for sequential inference at low traffic. Total cost: €65.78/month.

For disk, we kept the default 30GB OS disk but switched from Premium SSD to Standard SSD, saving ~€7-10/month. Since Ollama loads the model into RAM on first request, disk speed only matters at cold start. The difference between Premium and Standard SSD is maybe 3 seconds on startup. Not worth the premium.

VNet Planning: The Part We Almost Got Wrong

This is where most guides skip the important detail. We have three environments: dev, stage, and prod. Each runs as a separate Azure App Service.

Our initial instinct was to just open port 11434 on the VM and call it a day. That would have been a security disaster: Ollama has no authentication by default, so anyone with our VM's public IP could run inference and consume the capacity we pay for.

Instead, we designed a proper VNet subnet structure:

Each App Service connects to its dedicated subnet via VNet Integration. All subnets are in the same VNet, so they can communicate privately. Ollama never needs a public port open.

The result: port 11434 is completely invisible to the internet. Only our backend services can reach it and only via private IP.
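One way to keep this guarantee from eroding over time is a startup check in the backend that refuses to talk to Ollama over anything but a private address. The following is a hypothetical sketch, not part of the setup described here; the function name and the example addresses are placeholders.

```python
import ipaddress
from urllib.parse import urlparse


def is_private_endpoint(base_url: str) -> bool:
    """Return True only if the URL's host is a private (non-public) IP literal."""
    host = urlparse(base_url).hostname
    if host is None:
        return False
    try:
        return ipaddress.ip_address(host).is_private
    except ValueError:
        # Hostnames are rejected in this sketch; only IP literals are accepted.
        return False


# Placeholder addresses for illustration only.
print(is_private_endpoint("http://10.0.1.4:11434"))    # private VNet-style address
print(is_private_endpoint("http://20.50.1.1:11434"))   # public address
```

Note that this only validates configuration. The actual protection still comes from the VNet and from never opening the port publicly.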

Deploying Ollama

Installation

Simple. The install script handles everything including creating a systemd service.

Configuring the Service

The default Ollama installation binds to 127.0.0.1 (localhost only). Since our App Services need to reach it over the VNet, we need it listening on all interfaces. We also add queue settings so that concurrent requests are handled gracefully.

Our final /etc/systemd/system/ollama.service:

Breaking down the environment variables:

| Variable | Value | Reason |
| --- | --- | --- |
| OLLAMA_HOST | 0.0.0.0:11434 | Accept VNet traffic |
| OLLAMA_NUM_PARALLEL | 1 | Safe for 2 vCPU; no thrashing |
| OLLAMA_MAX_QUEUE | 5 | Buffer for traffic spikes |
| OLLAMA_MAX_LOADED_MODELS | 1 | Only one model held in memory at a time |
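The unit file itself is not reproduced in this copy of the post. As a rough illustration of how these four settings are usually expressed for systemd, the sketch below renders them as `Environment=` lines under a `[Service]` section. The layout is an assumption based on standard systemd syntax, not the exact file used in this deployment.

```python
# Settings taken from the table above.
OLLAMA_ENV = {
    "OLLAMA_HOST": "0.0.0.0:11434",
    "OLLAMA_NUM_PARALLEL": "1",
    "OLLAMA_MAX_QUEUE": "5",
    "OLLAMA_MAX_LOADED_MODELS": "1",
}


def render_service_section(env: dict[str, str]) -> str:
    """Render environment settings as a systemd [Service] fragment."""
    lines = ["[Service]"]
    lines += [f'Environment="{name}={value}"' for name, value in env.items()]
    return "\n".join(lines) + "\n"


print(render_service_section(OLLAMA_ENV))
```

After changing a unit file, systemd needs `systemctl daemon-reload` followed by a restart of the service before the new values take effect.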

Why We Didn't Need Azure Service Bus

This is the question we get asked most often: "Why not use a proper queue?"

Ollama has a built-in queue. For our traffic pattern, it works like this:

Azure Service Bus costs €10+/month and adds operational complexity. Ollama's built-in queue costs €0 and requires three lines of config. The math is obvious.
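To make the queueing behaviour concrete, here is a toy model of the admission logic under the settings above (one request in flight, up to five waiting). It is a simplification for illustration, not Ollama's actual scheduler; in particular, whether the in-flight request counts toward the queue limit is an assumption. Ollama's documentation describes an HTTP 503 response when the server is overloaded, which corresponds to the "reject" outcome here.

```python
NUM_PARALLEL = 1  # OLLAMA_NUM_PARALLEL
MAX_QUEUE = 5     # OLLAMA_MAX_QUEUE


def admit(in_flight: int, waiting: int) -> str:
    """Decide what happens to a new request given current load."""
    if in_flight < NUM_PARALLEL:
        return "run"
    if waiting < MAX_QUEUE:
        return "queue"
    return "reject"


def simulate_burst(n: int) -> list[str]:
    """Outcome for each of n requests arriving before any of them finishes."""
    in_flight = waiting = 0
    outcomes = []
    for _ in range(n):
        decision = admit(in_flight, waiting)
        if decision == "run":
            in_flight += 1
        elif decision == "queue":
            waiting += 1
        outcomes.append(decision)
    return outcomes


print(simulate_burst(8))
# ['run', 'queue', 'queue', 'queue', 'queue', 'queue', 'reject', 'reject']
```

A burst larger than six simultaneous requests would therefore need client-side retries, which is cheap to add compared with operating a separate broker.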

Connecting the Backend

Backend Integration Code
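The integration code itself is not reproduced in this copy of the post. As a minimal sketch of what a call from a backend could look like, assuming a Python service, the example below posts to Ollama's `/api/generate` endpoint with streaming disabled. The `OLLAMA_BASE_URL` setting name, the placeholder IP, and the prompt wording are all hypothetical.

```python
import json
import os
import urllib.request

# OLLAMA_BASE_URL is a hypothetical setting name; 10.0.1.4 is a placeholder private IP.
BASE_URL = os.environ.get("OLLAMA_BASE_URL", "http://10.0.1.4:11434")
MODEL = "qwen2.5:3b"


def build_payload(text: str, model: str = MODEL) -> dict:
    """Request body for a single, non-streaming completion."""
    return {
        "model": model,
        "prompt": f"Summarize the following text in three sentences:\n\n{text}",
        "stream": False,
    }


def http_post_json(url: str, payload: dict, timeout: float = 60.0) -> dict:
    """POST a JSON body and decode the JSON response."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


def summarize(text: str, post=http_post_json) -> str:
    """Return the model's summary; `post` is injectable so tests need no network."""
    body = post(f"{BASE_URL}/api/generate", build_payload(text))
    return body["response"].strip()
```

Keeping the base URL in configuration means each environment (dev, stage, prod) can point at the VM without code changes.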

Our environment strategy is clean:

Performance in Production

Real numbers from our deployment:

For a startup running low-traffic AI workloads, the performance is more than adequate.

The biggest win was the cost reduction:

For an early-stage product, that's a meaningful difference.
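The headline figure can be reproduced from numbers quoted earlier in this post: a VM cost of €65.78/month against an estimated €300-500/month for a hosted GPT-4 level model. The sketch below only restates that arithmetic; it does not include engineering time or other operating costs.

```python
# Figures quoted in this post.
VM_COST_EUR = 65.78
API_COST_RANGE_EUR = (300.0, 500.0)


def savings_percent(self_hosted: float, managed: float) -> float:
    """Percentage saved by self-hosting relative to the managed-API cost."""
    return (1 - self_hosted / managed) * 100


low, high = (savings_percent(VM_COST_EUR, cost) for cost in API_COST_RANGE_EUR)
print(f"Savings: {low:.0f}% to {high:.0f}%")  # Savings: 78% to 87%
```

The "80%" in the title sits inside that range.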

When to Scale Beyond This

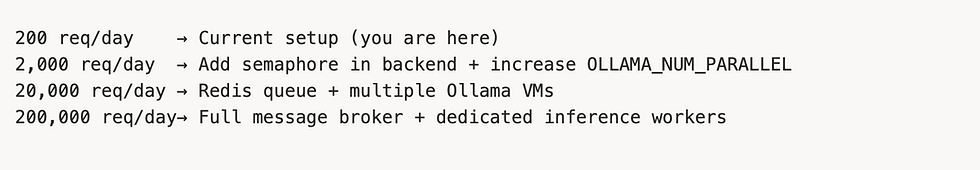

Our current setup handles 200 requests/day comfortably. Here's when we'd consider scaling:

The beauty of this architecture is that scaling is incremental. We don't need to redesign anything, just add layers as traffic grows.

Key Takeaways

- Self-hosted LLM is viable for small to medium workloads - don't assume you need managed AI services

- Simple architecture beats clever architecture - a VM and a process is often enough

- VNet integration is the right security model - never expose Ollama publicly

- Ollama's built-in queue is underrated - you don't need Redis for 200 req/day

- Plan your network topology first - subnet structure decisions are hard to change later

- Cold start is solvable - keep the model resident between requests. Note that OLLAMA_MAX_LOADED_MODELS=1 only caps how many models are loaded at once; how long a model stays in memory is controlled by Ollama's keep-alive setting (OLLAMA_KEEP_ALIVE) or by periodic keep-warm requests

What's Next

We are planning to:

- Add Azure Monitor alerts on VM CPU/memory

- Implement a keep-warm cron job to eliminate cold starts

- Evaluate larger models (7B) as we upgrade the VM

- Add streaming responses for better perceived responsiveness
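For the keep-warm item, one possible shape is a small script run on a schedule. Ollama's API loads a model into memory when `/api/generate` is called without a prompt, and the `keep_alive` field controls how long it stays loaded afterwards. The 30-minute value and the function names below are illustrative assumptions, not settings from this deployment.

```python
import json
import urllib.request


def build_keep_warm_payload(model: str = "qwen2.5:3b", keep_alive: str = "30m") -> dict:
    """Body with no prompt: asks Ollama to load the model without generating text."""
    return {"model": model, "keep_alive": keep_alive}


def keep_warm(base_url: str, timeout: float = 120.0) -> int:
    """Send the keep-warm request and return the HTTP status code."""
    req = urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(build_keep_warm_payload()).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.status
```

Scheduling `keep_warm` at an interval shorter than the keep-alive window would keep the model resident without sending real prompts.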

If you are building a similar setup or have questions about our approach, feel free to reach out.